Having gathered information from companies using Amazon Web Services (AWS), we have learned a lot about why it is so celebrated in corporate circles these days. Over that same period, we have also formed a fair idea of what is not so good about it. What we have ended up with is a high-availability, high-performance system that differs slightly from what Amazon advises.

Let’s look at two related things:

1. For folks who are trying to get started with AWS, or who want to know more about it, we thought we would share some benefits and challenges, having encountered them ourselves.

2. For those who are already using AWS, we know that the priority is always uptime, so we thought we would share some best practices for running a high-performance service.

It would be fair, and not over-enthusiastic, to say that AWS has brought about a revolution in the sheer economics of running a technology startup. Nobody notices how many companies are using Amazon’s Elastic Compute Cloud (EC2) somewhere in their stack until it has an outage, and suddenly it seems like half the Internet goes away. But Amazon did not manage this by fluke: they have an awe-inspiring product. Everyone uses AWS because EC2 has drastically simplified running software, by enormously lowering both the amount you need to know about hardware in order to do so and the amount of money you need to get started.

EC2 is a modern method of running software

The first and most essential thing to know about EC2 is that it is not “just” a virtualized hosting service. Another way of thinking about it is as hiring a fractional system and network administrator: instead of retaining one very expensive person to do a whole lot of automation, you pay a little bit more for every box you own, and you have whole classes of problems abstracted away. Network topology and power, hardware costs and vendor differences, network storage systems: these are things one had to think about back in 2000-2004. With AWS you do not have to pay them any heed, or at least not until you become a mammoth.

By far the biggest advantage of using EC2 is its flexibility. We can spin up a new box very, very rapidly: about 5 minutes from thinking “I think I need some hardware” to logging in for the first time and being ready to go.

This allows us to do some things that just a few years ago would have been crazy, for example:

• We can deploy major upgrades on new hardware. When we have a large upgrade, we spin up completely new hardware and get all the configs and dependencies right, then just swap it into our load balancer. Rolling back is as easy as resetting the load balancer, and moving forward with the new system means just shutting down the old boxes. Running twice as much hardware as you require, but for just 24 hours, is simple and cheap.

• Our downtime plan for a few non-critical systems, where up to an hour of infrequent downtime is acceptable, is to monitor the box and, if it fails, spin up a new box and restore the system from backups.

• We can ramp up in response to load events, rather than in advance of them: when your monitoring detects high load, you can spin up additional capacity, and it can be ready in time to handle the current load event, not the one after.

• We can stop worrying about pre-launch capacity calculations: we spin up what looks at a gut level to be sufficient hardware, launch, and then if we discover we’ve got it wrong, spin boxes up or down as required. This kind of iteration at the hardware level is one of the greatest features of AWS, and is only possible because they can provision (and de-provision) instances near-instantly.
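To make the load balancer swap described in the list above a little more concrete, here is a minimal sketch in Python. It is not our production tooling: the `LoadBalancer` class is a hypothetical in-memory stand-in for whatever load balancer you actually run, and the box names are made up for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class LoadBalancer:
    """Hypothetical in-memory stand-in for a real load balancer."""

    active: list[str] = field(default_factory=list)
    previous: list[str] = field(default_factory=list)

    def swap_in(self, new_pool: list[str]) -> None:
        # Remember the old pool so the swap can be undone later.
        self.previous = self.active
        self.active = list(new_pool)

    def roll_back(self) -> None:
        # Rolling back just points traffic at the old boxes again.
        if not self.previous:
            raise RuntimeError("no previous pool to roll back to")
        self.active, self.previous = self.previous, []


# Illustrative box names only.
lb = LoadBalancer(active=["old-1", "old-2"])
lb.swap_in(["new-1", "new-2"])
after_swap = list(lb.active)
lb.roll_back()
after_rollback = list(lb.active)
```

The point of keeping the old pool around is that nothing is shut down until you are happy with the new hardware, which is why running double the boxes for a day is part of the plan.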

But EC2 has some problems

While we admire EC2 and couldn’t have got where we are without it, it’s important to be honest that not everything is sunshine and roses. EC2 has serious performance and reliability limitations that it’s important to be aware of, and build into your planning.

First and foremost is their whole-zone failure pattern. AWS has multiple locations worldwide, known as “regions”. Within those regions, machines are divided into what are known as “availability zones”: these are physically co-located, but (in theory) isolated from each other in terms of networking, power, etc.

There are a few important things we’ve learned about this region-zone pattern:

Virtual hardware doesn’t last as long as real hardware. Our average observed lifetime for a virtual machine on EC2 over the last 3 years has been about 200 days. After that, the chances of it being “retired” rise hugely. And Amazon’s “retirement” process is unpredictable: sometimes they’ll notify you ten days in advance that a box is going to be shut down; sometimes the retirement notification email arrives 2 hours after the box has already failed. Rapidly-failing hardware is not too big a deal (it’s easy to spin up fresh hardware, after all), but it’s important to be aware of it, and to invest in deployment automation early, to limit the amount of time you need to burn replacing boxes all the time.
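As a rough back-of-the-envelope illustration of why automation pays off, the sketch below estimates how often a fleet needs a box replaced. It treats the 200-day figure as a simple mean lifetime and assumes a steady-state fleet of 100 boxes; both are simplifying assumptions for the arithmetic, not a model of how Amazon actually retires instances.

```python
def expected_replacements_per_week(fleet_size: int, mean_lifetime_days: float) -> float:
    """Steady-state replacements per week, assuming every box lasts
    mean_lifetime_days on average and is replaced as soon as it fails."""
    if mean_lifetime_days <= 0:
        raise ValueError("mean_lifetime_days must be positive")
    return fleet_size * 7 / mean_lifetime_days


# Assumed fleet of 100 boxes, 200-day mean lifetime.
rate = expected_replacements_per_week(100, 200)
```

Under those assumptions, `rate` works out to 3.5, so a fleet of that size would be replacing a box roughly every other day.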

You need to be in more than one zone, and redundant across zones. It’s been our experience that you are more likely to lose an entire zone than to lose an individual box. So when you’re planning failure scenarios, having a master and a slave in the same zone is as useless as having no slave at all — if you’ve lost the master, it’s probably because that zone is unavailable. And if your system has a single point of failure, your replacement plan cannot rely on being able to retrieve backups or configuration information from the “dead” box — if the zone is unavailable, you won’t be able to even see the box, far less retrieve data.
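One cheap way to catch the “master and slave in the same zone” mistake is to audit your placement data. The sketch below is a hypothetical check, not part of any AWS tooling: the group names and the `placement` mapping are invented for illustration, and in practice you would build that mapping from your own inventory.

```python
from __future__ import annotations


def single_zone_groups(placement: dict[str, list[str]]) -> list[str]:
    """Return the names of replica groups whose members all share one zone."""
    return sorted(
        name for name, zones in placement.items() if len(set(zones)) < 2
    )


# Hypothetical inventory: group name -> zone of each member box.
placement = {
    "db": ["us-east-1a", "us-east-1a"],
    "cache": ["us-east-1a", "us-east-1b"],
}
at_risk = single_zone_groups(placement)
```

Here `at_risk` contains only `"db"`: both of its boxes sit in the same zone, so losing that zone takes out the master and its replica together.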

Multi-zone failures happen, so if you can afford it, go multi-region too. us-east, the most popular (because it is the oldest and cheapest) AWS region, had region-wide failures in June 2012, in March 2012, and most spectacularly in April 2011, which was nicknamed “cloudpocalypse”. Our take on this, and we’re probably making no friends at AWS by saying so, is that AWS region-wide instability seems to frequently have the same root cause, which brings us to our next point.

To maintain high uptime, we have stopped trusting EBS

This is where we differ sharply from Amazon’s marketing and best-practices advice. Elastic Block Store (EBS) is fundamental to the way AWS expects you to use EC2: it wants you to host all your data on EBS volumes, so that when instances fail, you can switch the EBS volume over to the new hardware, in no time and with no fuss. It wants you to use EBS snapshots for database backup and restoration. It wants you to host the operating system itself on EBS, in what are known as “EBS-backed instances”. In our admittedly anecdotal experience, EBS presented us with several major challenges:

I/O rates on EBS volumes are poor: I/O rates on virtualized hardware will necessarily suck relative to bare metal, but in our experience EBS has been significantly worse than local drives on the virtual host (what Amazon calls “ephemeral storage”). EBS volumes are essentially network drives, and have all the performance you would expect of a network drive — i.e. not great. AWS have attempted to address this with provisioned IOPS, which are essentially higher-performance EBS volumes, but they’re expensive enough to be an unattractive trade-off.

EBS fails at the region level, not on a per-volume basis. In our experience, EBS has had two modes of behaviour: all volumes operational, or all volumes unavailable. Of the three region-wide EC2 failures in us-east that I mentioned earlier, two were related to EBS issues cascading out of one zone into the others. If your disaster recovery plan relies on moving EBS volumes around, but the downtime is due to an EBS failure, you’ll be hosed. We were bitten this way a number of times.

The failure mode of EBS on Ubuntu is extremely severe: because EBS volumes are network drives masquerading as block devices, they break abstractions in the Linux operating system. This has led to really terrible failure scenarios for us, where a failing EBS volume causes an entire box to lock up, leaving it inaccessible and affecting even operations that don’t have any direct requirement of disk activity.

For these reasons, and our strong focus on uptime, we abandoned EBS entirely, starting about six months ago, at some considerable cost in operational complexity (mostly around how we do backups and restores). So far, it has been absolutely worth it in terms of observed external up-time.

Lessons learned

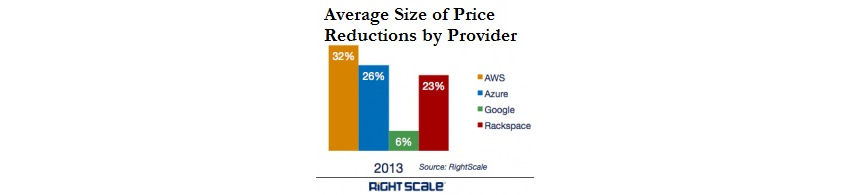

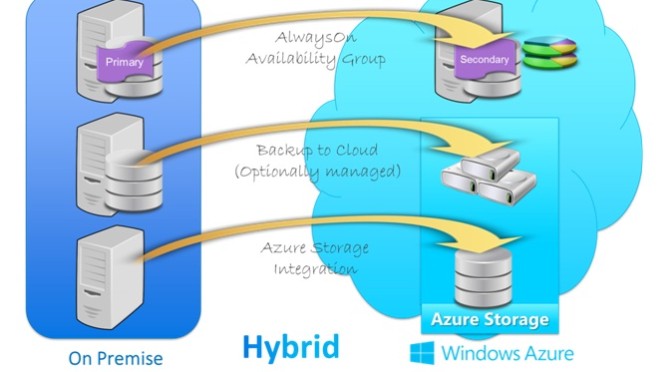

If we were starting awe.sm again tomorrow, I would use AWS without thinking twice. For a startup with a small team and a small budget that needs to react quickly, AWS is a no-brainer. Similar IaaS providers like Joyent and Rackspace are catching up, though: we have good friends at both those companies, and are looking forward to working with them. As we grow from over 100 to over 1000 boxes it’s going to be necessary to diversify to those other providers, and/or somebody like Carpathia who use AWS Direct Connect to provide hosting that has extremely low latency to AWS, making a hybrid stack easier.